Artificial intelligence has been transforming creative industries for years, but few technologies have sparked as much debate and excitement as AI voice cloning. While most conversations focus on concerns like deepfakes or the replacement of human voice actors, the reality on the ground is much more nuanced. For music producers, artists, and digital creators, AI voice cloning is opening up workflows that save time, cut costs, and allow for new forms of experimentation.

Instead of replacing people, these tools are increasingly acting as assistive technologies — helping podcasters polish episodes faster, enabling YouTubers to dub content into new languages, or allowing producers to create demo vocals without needing a singer on hand. For anyone working in music or creative media, the opportunities are surprisingly practical.

Here are five unexpected but increasingly common ways creators are putting AI-generated voices to work in their projects.

Podcasters Using AI Voice Cloning to Speed Up Production

Podcasting has grown into one of the most important channels for musicians and industry professionals. Many producers now run their own shows, offering breakdowns of their workflow, interviews with other artists, or commentary on music culture. The challenge, of course, is time. Editing hours of audio, re-recording lines, and maintaining consistency can eat into valuable studio sessions.

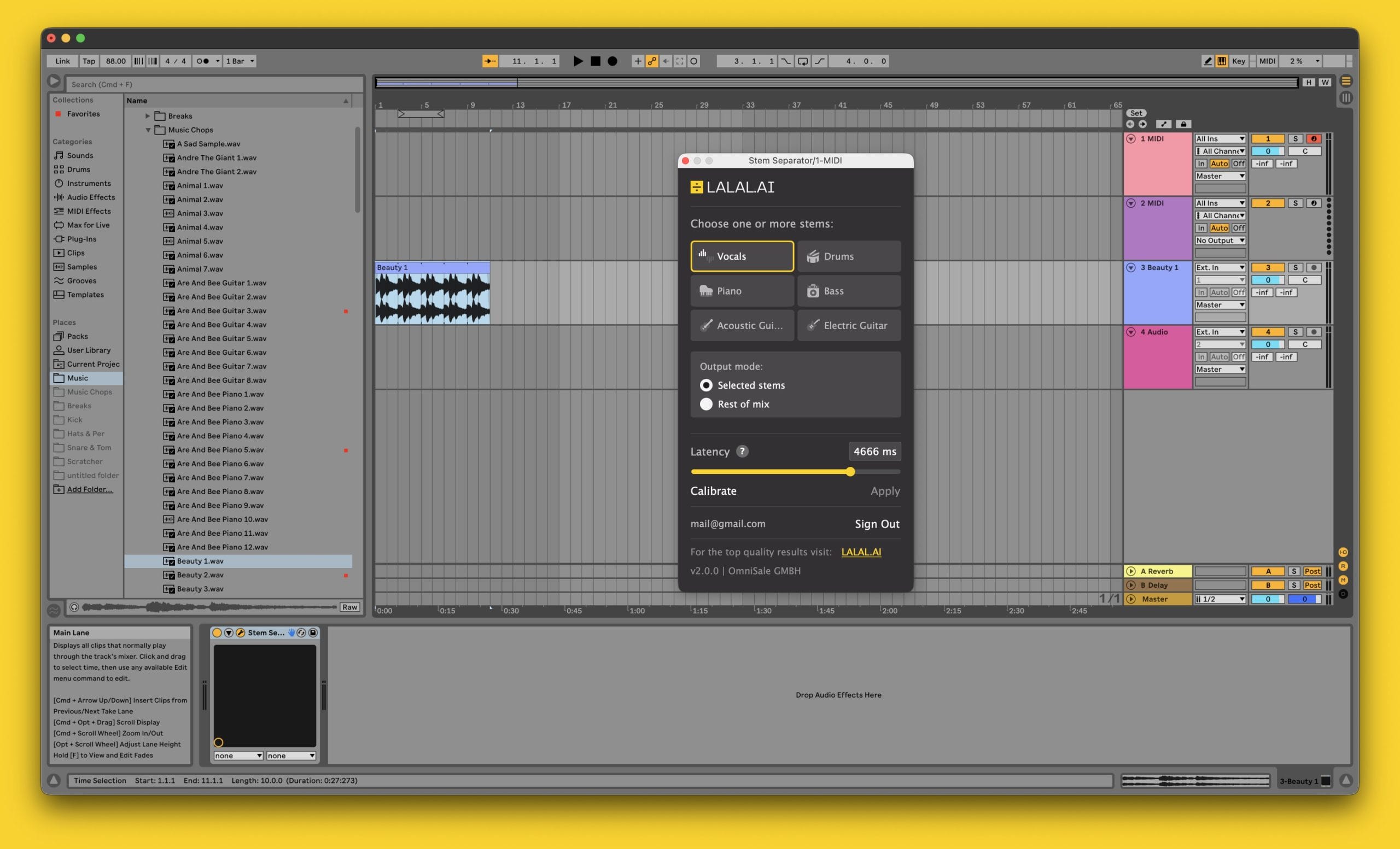

This is where AI voice cloning makes a real difference. Podcasters can record a clean vocal sample once, then use tools like LALAL.AI’s Voice Cloner to generate additional material in their own voice. Need to redo an intro or fix a botched sentence? Instead of re-recording in the booth, creators can type the line and let the cloned voice drop it into the mix.

For producers, this means less distraction from music-making and less time spent on content production. Even better, cloned voices can generate placeholder drafts of entire episodes, allowing you to test pacing and structure before committing to a final recording. Think of it as a scratch vocal for podcasts, much like the rough demo vocal you might use in a DAW session before tracking the real take.

YouTubers Dubbing Videos Into New Languages

YouTube is one of the most effective platforms for building an audience as a music producer. Tutorials, breakdowns, gear reviews, and “how it was made” videos often rack up thousands of views. But for producers trying to reach a global audience, language is still a barrier.

AI voice cloning helps address this by allowing creators to dub their own videos into multiple languages without hiring outside talent. Services like LALAL.AI, combined with AI translation tools, can generate multi-language versions of your content while keeping it in your own voice. Instead of reading subtitles, international viewers can hear you explain your production workflow in Spanish, German, or Japanese, all while maintaining your personal tone and delivery.

For producers building a career internationally, this kind of multilingual presence is powerful. Labels, fans, and collaborators in other markets can experience your content more naturally, and you save the time and expense of outsourcing. In a music industry where streaming platforms are global from day one, this is a practical way to stay competitive.

Teachers and Educators Making Custom Study Tools

Many producers supplement their careers by teaching — whether through private lessons, online courses, or platforms like Skillshare and Patreon. The biggest challenge here is producing high-quality educational materials that don’t require endless recording and editing.

With AI voice cloning, educators can automate recurring content like instructions, FAQs, or lesson summaries. Imagine recording a core set of vocal samples, training a clone, and then using it to narrate practice exercises or create supplemental materials. Instead of having to re-record repetitive instructions, you can focus on developing the actual content.

Another powerful use: creating personalized resources for students. For example, a music production teacher could provide practice prompts in a student’s native language, generated with the teacher’s own cloned voice. That adds a level of personalization and accessibility that previously would have required hiring translators and voice actors.

Authors and Producers Turning Written Work Into Audio

Beyond tracks and tutorials, many music producers write articles, blogs, or even books. Converting these written works into audiobooks or narrated blogs has traditionally been time-intensive and expensive, requiring voice talent and studio time.

AI voice cloning allows authors to narrate their work in their own voice, without spending weeks recording in a studio. Producers writing eBooks on mixing, guides to music business, or long-form commentary can now easily release audio versions for fans who prefer listening.

This isn’t limited to long projects. Even short-form content, such as a Substack newsletter or a blog post about new plugins, can be quickly converted into audio with a cloned voice. For audiences who prefer listening, this gives you an additional distribution channel and can foster stronger engagement.

For creatives, the takeaway is simple: if you’re already investing time in writing, cloning your voice gives you another medium without doubling your workload.

Influencers Enhancing Social Media With AI Narration

Social media is where many producers build their brand, and standing out in the endless feed is tough. AI-generated voiceovers provide influencers and musicians with an easy way to add personality to their posts without having to sit down and record every single clip.

Think about Instagram reels explaining a production trick, TikTok videos breaking down a new plugin, or even behind-the-scenes clips from the studio. Instead of always being on camera, producers can generate narration in their own voice to explain what’s happening. This keeps content personal and consistent, while reducing the performance fatigue that comes with daily posting.

AI voice cloning also enables faster experimentation. You can test different tones, phrases, or pacing without recording dozens of takes. For music producers trying to balance social media with actual production, this efficiency can make content creation sustainable.

The Risks and Considerations

While these uses are practical and exciting, music producers should approach AI voice cloning with awareness.

- Consent and Ownership – Never use someone else’s voice without explicit permission. In music, this extends to vocalists, collaborators, and even session singers.

- Quality Control – Poor training data or rushed use can lead to robotic, unnatural-sounding results. As with any creative tool, attention to detail matters.

- Transparency – Be clear with your audience when you’re using AI-generated voices. Fans appreciate honesty, and it helps avoid confusion or mistrust.

- Balance – Use AI as an assistant, not a replacement. Real human connection still drives engagement in music and creative communities.

Why Music Producers Should Pay Attention

For producers, the appeal of AI voice cloning goes beyond convenience. It’s about creating new layers of expression. Whether you’re teaching, publishing, podcasting, or simply trying to connect with your audience, your voice is part of your brand. Having the ability to extend that voice across formats, languages, and platforms is a powerful tool.

Just as MIDI once opened up new ways to compose and perform, AI voices are starting to play a similar role in communication. For busy producers who already juggle making tracks, performing, teaching, and building an online presence, voice cloning reduces friction. It turns hours of manual work into minutes of automation, freeing more time for music itself.

Conclusion

AI voice cloning is often framed as controversial, but in practice it is quietly becoming a workhorse tool for music producers and creatives. Podcasters can speed up their workflows, YouTubers can expand globally, teachers can provide personalized resources, authors can narrate their own work, and influencers can enhance their content pipelines.

For the creative community, these are practical solutions. By adopting AI voice tools responsibly, producers can scale their presence, preserve authenticity, and experiment with new formats without losing their focus on the music. The lesson is clear: in an era where attention is fragmented and content is global, finding efficient ways to extend your voice can make all the difference.

Magnetic byline note: This byline is used for staff produced updates and short announcements, often based on press materials and official release information. Editorial responsibility: David Ireland (Editor in Chief) and Will Vance (Managing Editor). About: https://magneticmag.com/about/ Masthead: https://magneticmag.com/masthead/ Contact: https://magneticmag.com/contact/